When Property Managers Get a Brain (Rot): Guardrails for using AI in tenancy law troubleshooting

Landlords hire you for your brain. And they’re about to ask if they can rent it from a robot instead.

72% of landlords who use a property manager do it because the manager “knows more about how to comply with the government regulations.” Not because you’re cheap or charming, but because you keep them out of trouble with Big Brother.

Every one of those landlords can now open a browser and ask ChatGPT to “write me a response to this Tribunal application” or “explain Healthy Homes”. AI will spit out something long, confident and competent-looking. And while it is sloppy today, it will be less so tomorrow. The hallucination will fade, but the substitution will not. And this, ladies and gentlemen, is when the value you create and the value you maintain start to part ways.

The expertise wedge is closing

Professional services live and die on asymmetry. You know things your client does not, can’t, or won’t know; they pay you handsomely for it (or, in the property manager’s case, they pay you for it). But if you don’t continue to push your capabilities upstream, AI will be the asymmetry-killer that is coming for your market share.

Gen AI takes any reasonably well-documented domain and spits out something that looks an awful lot like professional advice. Owners don’t suddenly become tenancy law experts; the perceived complexity just falls off a cliff. If it feels like a chatbot can draft a response, why would anyone pay you 10% plus GST?

This HBR article is the canary in the coal mine: If you are waiting for a flawless, god-mode AI before implementing across the business, you are already way behind. Today’s flawed version already saves time, cuts costs, and unlocks value. The question is who captures that value. If you and your owner are using the same commodity tools for the same tasks, the gains will accrue to the party with more options. And that’s usually the owner.

In property management, that plays out as:

Owners using AI to write emails, notices, and even Tribunal responses that you used to draft.

New, ultra-lean PM competitors using AI to strip headcount and undercut your fees.

Staff inside your business quietly pasting AI output into live files because “it sounded right.” Psst, it often doesn’t.

The slow leak of margin is one thing. The erosion of trust and confidence is much more severe.

Skynet is not the problem. Slop is.

We have a cute way of talking about AI failures: “hallucinations.” But here’s the thing: you can either laugh it off as a party trick, or you can treat it as an operational reality and strategise around it. In tenancy troubleshooting, that means working out how to handle plausible-sounding nonsense, because an exceptionally fluent half-truth can be lethal to a case.

We’ve all seen it:

Advice that treats Healthy Homes Standards as a kind of universal law of property condition, calling a non-vented dryer a “breach”, while ignoring the Housing Improvement Regulations, Building Code, and safety rules that actually govern specific issues.

Draft Tribunal responses that never mention mitigation, never explain how liability shifts when careless damage affects insurance, and never touch the questions an adjudicator actually cares about.

It’s less hallucination and more Dunning-Kruger. The dangerous bits are the things that never get said, buried inside documents that sound like they were written by a competent adult.

Two questions before you touch AI

The HBR playbook offers a simple way to sort the signal from the hype: two questions for every task.

What’s the cost of getting things wrong? Are we talking about minor annoyance or serious legal/financial/reputational harm?

What kind of knowledge is required? Do you need explicit data (e.g. statutes, dates, records) or tacit knowledge (e.g. judgment, context, relationship nuances, understanding competing perspectives)?

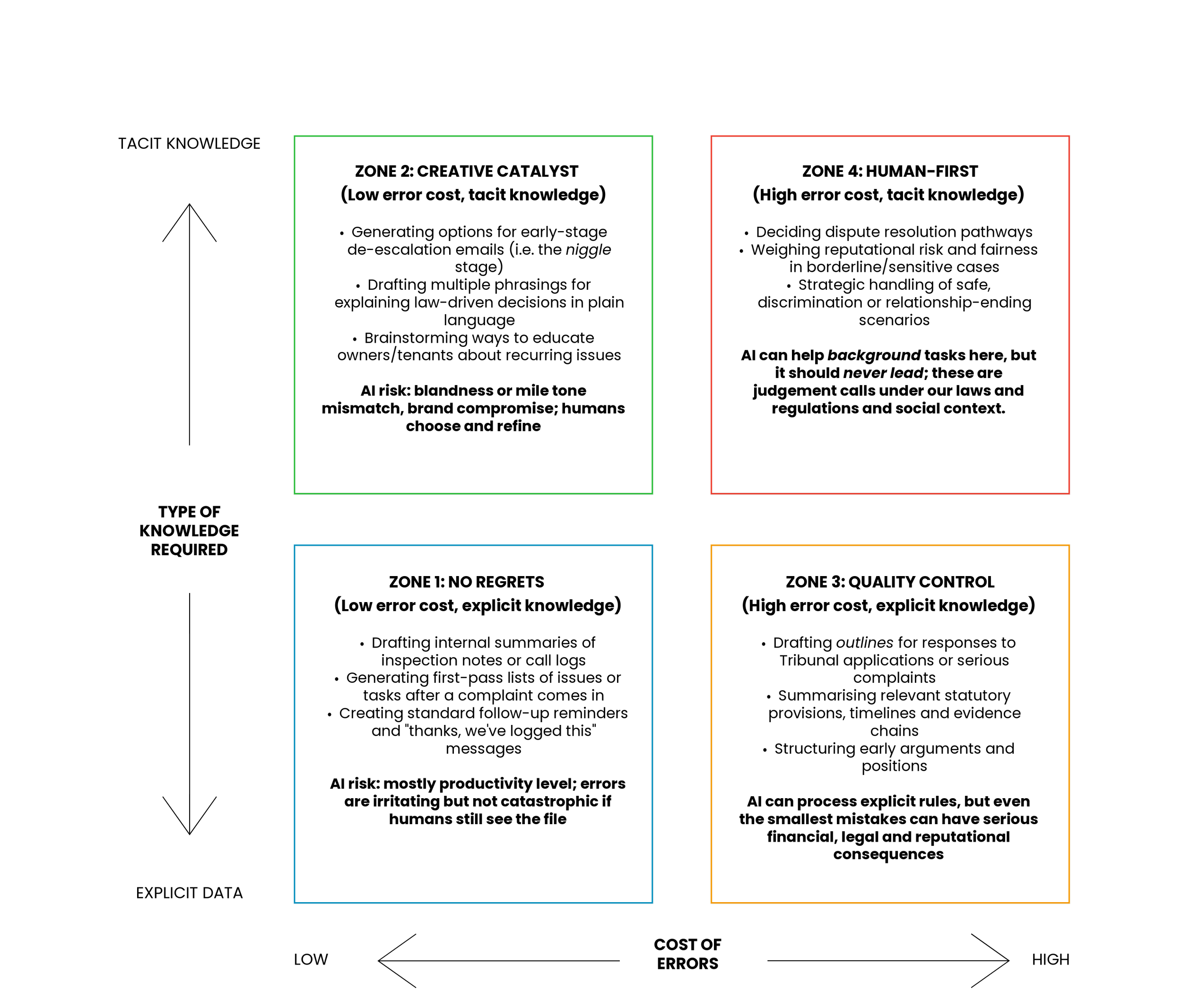

Tasks with low error cost and explicit knowledge are the natural home of AI. High error cost plus tacit judgment is where you keep humans in the driver’s seat and bolt the doors. Tenancy troubleshooting spans that whole grid. So you need a map.

The four troubleshooting zones

Here’s what the map could look like*:

A quick tour:

Zone 1: No regrets (low error cost, explicit knowledge) AI can summarise inspection notes and churn out standard ‘we’ve logged this’ messages. Someone still glances at it; no one’s reputation dies on the hill of a typo.

Zone 2: Creative catalyst (low error cost, tacit knowledge) Use AI to generate options for early de-escalation emails, plain English explainers and education content. Humans pick, edit and own the tone. At worst, you sound boring.

Zone 3: Quality control (high error cost, explicit knowledge) On paper, perfect for AI: drafting outlines for Tribunal responses, summarising statutes, structuring evidence. In reality, this is where the model’s lack of legal understanding can bite you in the you-know-where-the-sun-doesn’t-shine. It can process rules, but it cannot identify the gaps that will kill your case. Take this approach: AI drafts, humans decide.

Zone 4: Human first (high error cost, tacit knowledge) Settlement vs fight. Safety and discrimination allegations. Reputationally sensitive exits. AI can help with low-stakes background tasks like timelines and summaries, but it must never lead. These should be reserved exclusively for those in a position of responsibility.

The point of this quadrant is not to make you take a position about AI. It’s to stop you from using a chainsaw to butter toast.
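If it helps to see the map as a lookup rather than a picture, here is a minimal sketch of the two questions feeding the four zones. The names `ErrorCost`, `Knowledge`, `ZONES` and `classify_task` are mine, made up for illustration; the zone labels come from the tour above, and the answers to the two questions still have to come from a human who knows the file.

```python
from enum import Enum


class ErrorCost(Enum):
    LOW = "low"    # minor annoyance
    HIGH = "high"  # serious legal, financial or reputational harm


class Knowledge(Enum):
    EXPLICIT = "explicit"  # statutes, dates, records
    TACIT = "tacit"        # judgment, context, relationships


# Hypothetical lookup table: one entry per quadrant of the map.
ZONES = {
    (ErrorCost.LOW, Knowledge.EXPLICIT): "Zone 1: No regrets",
    (ErrorCost.LOW, Knowledge.TACIT): "Zone 2: Creative catalyst",
    (ErrorCost.HIGH, Knowledge.EXPLICIT): "Zone 3: Quality control",
    (ErrorCost.HIGH, Knowledge.TACIT): "Zone 4: Human first",
}


def classify_task(error_cost: ErrorCost, knowledge: Knowledge) -> str:
    """Return the zone for a task, given the answers to the two questions."""
    return ZONES[(error_cost, knowledge)]


# Example tasks taken from the tour above; the answers are a human's call.
examples = [
    ("Summarise inspection notes", ErrorCost.LOW, Knowledge.EXPLICIT),
    ("Outline a Tribunal response", ErrorCost.HIGH, Knowledge.EXPLICIT),
    ("Decide whether to settle or fight", ErrorCost.HIGH, Knowledge.TACIT),
]
for task, cost, knowledge in examples:
    print(f"{task}: {classify_task(cost, knowledge)}")
```

The code is the trivial part. The value is in forcing someone to answer both questions for every task before a chatbot gets anywhere near it.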

The dumbest way to use AI (and the smartest)

The dumbest way to use AI in tenancy troubleshooting is also the most common:

No policy.

No guardrails.

Case-by-case experiments on live disputes.

A property manager gets a Tribunal application, pastes it into a chatbot, copies the answer into a Word document, tweaks a few words, and sends it to the owner. No review by a senior. No cross-check against the Act, regulations or current Tribunal practice. You may as well call the business “The Home of Tenancy Malpractice”.

The smartest way is as boring as it is grown-up:

Decide explicitly which troubleshooting tasks fall into which zone.

Write down where AI runs solo, where it only assists and where it is banned.

Use AI to build internal RTA capability, then force a cross-check against the actual law, official guidance, and legal advice.

In other words: AI is your junior analyst, not your Head of Legal.

Guardrails for an AI-integrated PM practice

If 72% of your clients say they’re buying your regulatory expertise, you cannot afford to have unsupervised AI ghost-writing the parts of the job that carry the most risk and the most value.

At a minimum, your business needs four safety rails:

- For each troubleshooting task, ask the two questions: cost of error, type of knowledge. High-risk tasks go to the right people, not the right prompt.

- In Zones 3 and 4, AI can outline and summarise. It never sends, files, or commits you to a position without senior review.

- Ban “I just asked ChatGPT and sent what it said” on anything that smells like a dispute. If AI is in the workflow, it’s in a narrowly defined, clearly documented and tactically deployed role.

- Treat AI as a teacher’s assistant that sometimes makes mistakes. It can speed up learning; it cannot replace it. Your staff will still have to read the statute, the regulations and the decisions to understand the law.

Deploying a powerful but imperfect tool means you have a responsibility to capture the upside and quarantine the downside.
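For businesses that track drafts in software, the senior-review rail could be expressed as a simple gate. This is a rough sketch only: `Draft` and `may_send` are hypothetical names, not part of any real system, and the rule for Zones 1 and 2 (send after the usual human glance) is my simplifying assumption.

```python
from dataclasses import dataclass


@dataclass
class Draft:
    """Hypothetical record of a document about to leave the business."""
    task: str
    zone: int                      # 1 to 4, as agreed on your zone map
    ai_generated: bool
    senior_reviewed: bool = False


def may_send(draft: Draft) -> bool:
    """Block AI-written drafts in Zones 3 and 4 until a senior signs off."""
    if draft.zone not in (1, 2, 3, 4):
        raise ValueError(f"Unknown zone: {draft.zone}")
    if draft.ai_generated and draft.zone in (3, 4):
        return draft.senior_reviewed
    # Assumption: everything else follows your normal review process.
    return True


tribunal_outline = Draft("Tribunal response outline", zone=3, ai_generated=True)
print(may_send(tribunal_outline))   # blocked until reviewed

tribunal_outline.senior_reviewed = True
print(may_send(tribunal_outline))   # cleared after senior sign-off
```

A gate like this does not make the draft any better. It just makes “I just asked ChatGPT and sent what it said” impossible to do by accident.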

A roadmap for your business

Given that every Tom, Dick and Harry has access to AI, those who get to exploit its value are the ones who use it strategically. The only way property managers keep their wedge is by pushing their capabilities upstream: using AI deliberately where it is safe, and doubling down on human judgement where it isn’t.

Most property managers I talk to don’t need a 50-prompts pack to hack the RTA. They need a map, agreed upon by the people who actually carry the risk. That’s why I’ll be running an online leadership workshop on AI in tenancy troubleshooting for property management businesses: a working session where we map your troubleshooting workflow onto the four zones, identify the no-regrets use-cases, and mark the red-line areas where AI should never have the keys.

You’ll walk away with something your competitors probably don’t have yet: a clear, business-wide view of where AI is safe, where it is dangerous, and where it’s genuinely worth the effort in tenancy troubleshooting before your owners and rivals make those calls for you.

* I adapted this directly from the Anand and Wu framework.